In logistics, “big data” rarely feels like a strategy. It feels like Tuesday.

A load moves, and a trail of information follows it. Pickup timestamps, appointment updates, GPS pings, EDI messages, emails from a carrier rep, a detention note from a warehouse, a revised ETA, an ETA revised yet again, a POD, an accessorial, an invoice. None of this is unusual. What’s new is the volume and speed at which it happens across thousands of shipments and dozens of partners.

Teams often tell the same story. They have data everywhere, but answers nowhere. Two dashboards show two different delivery times. Finance has one version of cost. Operations has another. Customer service does not trust either, so they call the carrier. The hidden cost is not the data itself. It’s the time spent reconciling it.

A modern TMS manages big data by doing three things well. It standardizes information, connects it across systems, and turns it into workflow. That is the difference between a control center and a data dump.

The reality of logistics data

Logistics data is messy by nature. It comes from systems built for different purposes and different time horizons.

A warehouse system cares about inventory and dock activity. An ERP cares about orders and accounting. Carriers and brokers send updates through EDI, APIs, portals, and email. Visibility providers translate location signals into shipment events. Each source is “right” in its own context, and still conflicts with the others.

Industry definitions of a TMS keep circling back to the same core point. A TMS exists to plan, execute, and optimize shipments, including booking and tracking through delivery.

The catch is that “tracking” is no longer one event. It is a stream. That stream is the big data problem.

Turning noise into a single shipment story

The most useful thing a TMS does with large volumes of data is surprisingly simple. It forces a shipment to look like a shipment everywhere.

Instead of scattered facts, you get a structured timeline that holds up across teams. Order created. Load built. Carrier tendered. Appointment confirmed. Pickup. In transit milestones. Exceptions. Delivery. POD. Invoice. When the TMS owns that timeline, the organization stops debating what happened and starts deciding what to do next.

This is also why many operations rely on integration layers. A visibility platform often acts as a normalizer, translating inconsistent carrier signals into consistent shipment events and triggers.

A TMS that can consume normalized events and attach them to the right load creates something logistics teams rarely get. A shared, trusted version of reality.
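As a rough illustration of what "consume normalized events and attach them to the right load" can mean, here is a minimal Python sketch. The status codes, field names (`load_ref`, `status`, `time`), and milestone vocabulary are all hypothetical and not taken from any specific TMS, carrier, or visibility provider. It maps source-specific statuses to shared milestones and builds one ordered timeline per load.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Hypothetical mapping from (source, status code) to a shared milestone name.
# Real carriers and providers each use their own vocabularies.
MILESTONE_MAP = {
    ("edi", "PU"): "PICKUP",
    ("edi", "DL"): "DELIVERY",
    ("api", "picked_up"): "PICKUP",
    ("api", "delivered"): "DELIVERY",
}

@dataclass(frozen=True)
class ShipmentEvent:
    load_id: str
    milestone: str
    occurred_at: datetime
    source: str

def normalize(raw: dict) -> Optional[ShipmentEvent]:
    """Translate one raw update into a shared milestone, or None if unmapped."""
    milestone = MILESTONE_MAP.get((raw["source"], raw["status"]))
    if milestone is None:
        return None  # unmapped statuses would go to a review queue in practice
    return ShipmentEvent(
        load_id=raw["load_ref"],
        milestone=milestone,
        occurred_at=datetime.fromisoformat(raw["time"]),
        source=raw["source"],
    )

def build_timelines(raw_updates):
    """Group normalized events by load and sort each timeline by event time."""
    timelines = defaultdict(list)
    for raw in raw_updates:
        event = normalize(raw)
        if event is not None:
            timelines[event.load_id].append(event)
    for events in timelines.values():
        events.sort(key=lambda e: e.occurred_at)
    return dict(timelines)
```

The design choice worth noting is that every team reads the same `ShipmentEvent` records, regardless of which channel the update arrived through.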


Integration is how big data becomes usable

Big data becomes operational only when systems talk to each other without manual translation.

Most TMS implementations live or die based on integration quality. ERP for orders and billing. WMS for readiness and appointments. Carriers through EDI or APIs. Telematics and ELD for location and driver context. Major TMS providers and industry guides consistently highlight API and EDI as core integration methods for eliminating silos and improving report accuracy.

When integration works, three changes show up fast.

Order data enters once. Load planning becomes faster and cleaner. Billing disputes drop because documents and charges tie back to the same load record, not a chain of emails.

When integration fails, the opposite happens. People become the middleware. That is where big data turns into big stress.


Filtering matters more than collecting

Plenty of teams have more data than they can use. The teams that look calm are not collecting less. They are filtering better.

A strong TMS does not ask a planner to stare at everything. It organizes information by operational meaning: lane, facility, carrier, customer, time window, exception type. The point is to see patterns without losing the ability to drill into one load when it matters.
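To make "see patterns, then drill into one load" concrete, here is a small Python sketch. It assumes each load is a plain dictionary with hypothetical keys such as `lane`, `carrier`, and `exception`. A real TMS would run this kind of query against its own data model.

```python
from collections import Counter

def exception_counts(loads, dimension):
    """Count loads with an open exception, grouped by one dimension (e.g. 'lane')."""
    return Counter(load[dimension] for load in loads if load.get("exception"))

def drill_down(loads, **criteria):
    """Return the individual loads that match every given field value."""
    return [
        load for load in loads
        if all(load.get(field) == value for field, value in criteria.items())
    ]

# Illustrative data only.
loads = [
    {"id": "L-1", "lane": "CHI-DAL", "carrier": "Acme", "exception": "late_pickup"},
    {"id": "L-2", "lane": "CHI-DAL", "carrier": "Bolt", "exception": None},
    {"id": "L-3", "lane": "ATL-MIA", "carrier": "Acme", "exception": "dwell"},
    {"id": "L-4", "lane": "CHI-DAL", "carrier": "Acme", "exception": "dwell"},
]

by_lane = exception_counts(loads, "lane")  # the pattern view
problem_loads = drill_down(loads, lane="CHI-DAL", carrier="Acme")  # the single-load view
```

The same two functions serve both needs: a grouped count for the overview, and a filtered list when one lane or carrier needs a closer look.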

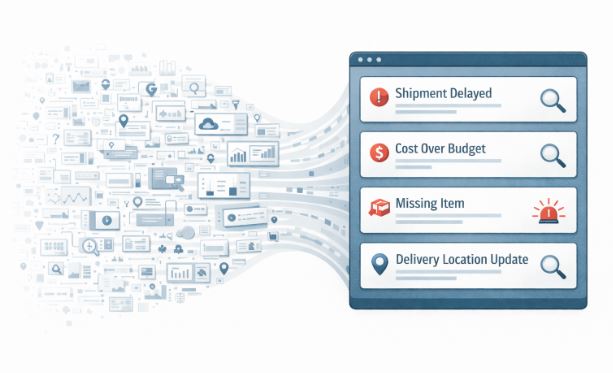

This is where “control tower” thinking has become common. Not as a buzzword, but as a practical need. A TMS or control-tower layer captures real-time updates through API or EDI connections and makes the status usable across roles.

Good filtering is also the cure for dashboard fatigue. If a dashboard does not change what someone does today, it becomes wall art.

From reporting to action

The trend in 2025 and 2026 logistics software is consistent. More systems are built around exception management and workflow, not static reporting.

Big data helps only when it triggers action at the right time. Late pickup risk should route to dispatch, not to a monthly KPI report. A dwell pattern at a facility should drive an appointment rule change, not another meeting. A carrier that consistently misses tight windows should be flagged during tendering, not after QBR season.
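The routing described above can be sketched as a small rule table in Python. Everything here is an assumption for illustration: the signal field names, the thresholds (60 minutes, 120 minutes, 85 percent), and the team names are hypothetical and would be tuned to a real operation.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Rule:
    name: str
    applies: Callable[[dict], bool]  # predicate over one signal dictionary
    route_to: str                    # team or workflow that should act

# Hypothetical thresholds. Missing fields default to "no problem" values.
RULES = [
    Rule(
        "late_pickup_risk",
        lambda s: s.get("minutes_to_pickup", float("inf")) < 60
        and not s.get("driver_assigned", True),
        "dispatch",
    ),
    Rule(
        "facility_dwell",
        lambda s: s.get("avg_dwell_minutes", 0) > 120,
        "appointment_scheduling",
    ),
    Rule(
        "carrier_misses_windows",
        lambda s: s.get("carrier_on_time_rate", 1.0) < 0.85,
        "tendering",
    ),
]

def route(signal: dict) -> list:
    """Return (rule name, destination) pairs for every rule the signal triggers."""
    return [(rule.name, rule.route_to) for rule in RULES if rule.applies(signal)]

# Example: an unassigned load close to pickup, on a carrier with weak on-time history.
actions = route({
    "minutes_to_pickup": 30,
    "driver_assigned": False,
    "carrier_on_time_rate": 0.80,
})
```

Keeping rules as data rather than scattered conditionals makes it easier to review who gets alerted and why, which is the point of moving from reporting to action.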

This is also where adjacent disciplines like process intelligence have gained traction. The theme is the same: unify data from multiple enterprise systems to see how work actually flows, then remove bottlenecks.

In transportation, the “work” is the shipment lifecycle. A TMS becomes the place where big data turns into operational muscle memory.

Predictive signals without the black box

Logistics teams do not need magic. They need early signals they can trust.

Predictive ETAs, risk flags, and lane trend detection are useful when the inputs are clean and consistent. They fail when data is incomplete or contradictory. That is why big data management starts with fundamentals: standardized locations, consistent milestone definitions, disciplined carrier onboarding, and clear accessorial rules.

Even Gartner’s framing of real-time transportation visibility emphasizes location and status insights into orders once they leave the warehouse. A TMS that manages big data well takes those location and status signals and attaches them to the decisions people make: carrier assignment, appointment changes, customer updates, and cost controls.


Big data maturity looks boring, and that is the goal

When a TMS is doing its job, big data stops feeling dramatic. No one talks about “data projects.” People talk about fewer surprises.

Customer service trusts ETAs. Finance trusts margin by lane. Operations trusts exception alerts. Leadership trusts weekly performance trends without re-litigating definitions.

That is what big data should do in logistics. It should reduce arguments and increase clarity.

Where FTM fits

FTM is built to manage logistics data as a living stream, not as a monthly report.

It connects planning, execution, carrier performance, visibility signals, documents, and billing into one workflow so teams stop reconciling systems and start managing outcomes. If your operation is growing and the data burden is growing faster, the fix is rarely another spreadsheet or another dashboard. It is a system that can standardize, connect, and operationalize the data you already generate.

Book a demo at FTM